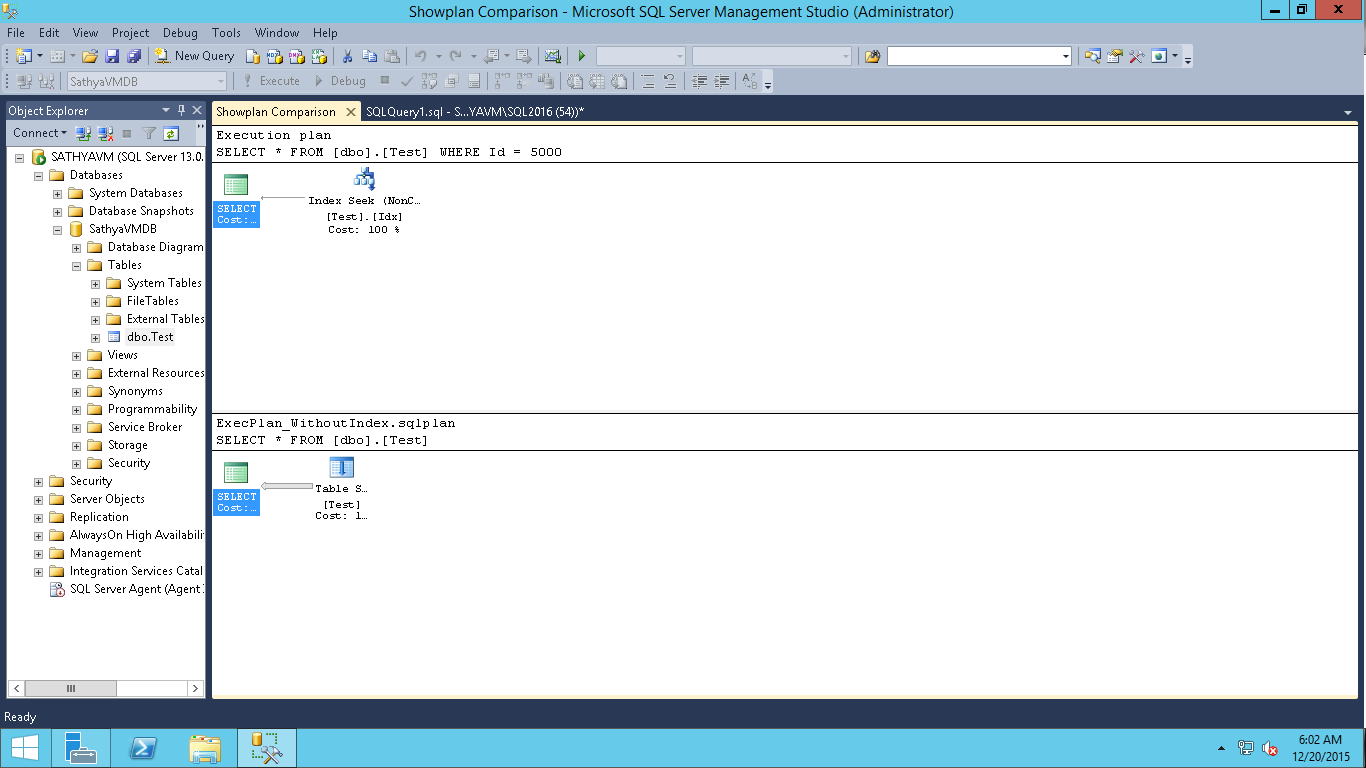

On the other hand, the list of authentication methods mentioned above includes an AAD Application. More DocumentationĪnother closer look on the KqlMagic git repo and we find this documentation about authentication methods on the repo:ĪAD Username/password – Provide your AAD username and password.ĪAD application – Provide your AAD tenant ID, AAD app ID and app secret.Ĭode – Provide only your AAD username, and authenticate yourself using a code, generated by ADAL.Ĭertificate – Provide your AAD tenant ID, AAD app ID, certificate and certificate-thumbprint (supported only with Azure Data Explorer)Īppid/appkey – Provide you application insight appid, and appkey (supported only with Application Insights)įinally, it’s just a matter of linking the dots: On the sample connection strings, the one which makes more sense mention an AppId inserted as the clientId key value, even against the advise that App Ids work only for the demo. I can ensure you: The solution is above, but it is still difficult to find. I tried to leave some parameters missing, but it didn’t work for me, KqlMagic always complains about the missing parameters.The connection string on the demo only works for the demo.The numbered items help to exclude the options that don’t work: (8) anonymous authentication, is NO authentication, for the case that your cluster is local. This is how you can parametrize the connection string (7) a not quoted value, is a python expression, that is evaluated and its result is used as the value. (6) if tenant is missing, and a previous connection was established the tenant will be inherited. (5) if secret (password / clientsecret) is missing, user will be prompted to provide it. (4) if credentials are missing, and a previous connection was established the credentials will be inherited. (2) username/password works only on corporate network. (1) authentication with appkey works only for the demo. 
% kql loganalytics : / / anonymous workspace = '' alias = '' If you ask help to KqlMagic itself, the information you get is the same on the notebook, and it doesn’t help to much when trying to connect to a workspace.The github repo has a Get Started With KqlMagic and Log Analytics which redirects you to an online executable notebook with some explanations about the connection string, but using the demo workspace.The article explaining how to do it only connects to a demo Log Analytics Workspace kept by Microsoft.What may appear easy in the beginning, is not at all. At the bare minimum, you will find some great links and ideas. However, I like to explain how I reached the solution and what didn’t work. If you are thirsty for the solution, you can skip this part and go to the solution a bit below on the blog. Yes, Azure Data Studio is so flexible it supports Python and we can install Python packages. However, with some help from Microsoft community on twitter I was able to find a work around: We can use KqlMagic, a Python package, to connect to Log Analytics.

Of course, you already noticed how I got disappointed by discovering Azure Data Studio couldn’t connect to Log Analytics. What do you think? Let’s talk about on the comments. It puzzles me that one receives more attention than the other. On the other hand, software houses creating custom solutions or, in some situations, solution providers trying to manage a big number of clients, may find Azure Data Explorer as a better solution for them. These numbers allow the IT Administrators to do in the cloud what only a few really manage in an organized way: Keep baselines and compare results after every environment change.

Log Analytics can be a fundamental tool on the process of cloud adoption, because it gives numbers to the process.

On the other hand, Azure Data Explorer is a database storage MPP (Massive Parallel Processing) solution for massive stream storage – logs – allowing anyone to create their own monitoring solution using it as a storage, but not a ready-to-use solution as Log Analytics.Ĭonsidering these differences, the use cases for Log Analytics seems related to migrations to the cloud and all the following monitoring work. Log Analytics is a ready-to-use monitoring solution for cloud and on-premises environment. All this huge focus on Azure Data Explorer given by Microsoft still puzzles me. However, Azure Data Studio only supports Kusto connections with Azure Data Explorer, not Log Analytics. That’s why Kusto was initially created for Azure Monitor and Azure Log Analytics. Kusto is a query language used for massive amounts of streamed data, perfect for logs. Recently, Azure Data Studio included the support to Kusto language, or KQL. Connecting to Log Analytics using Azure Data Studio and KQL Simple Talk Skip to contentĪzure Data Studio is a great tool and supports way more than only SQL.
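To make the AAD application idea concrete, here is a minimal sketch of how such a connection string could be assembled in Python. The key names `tenant`, `clientid`, `clientsecret`, `workspace` and `alias` are taken from the KqlMagic help notes and sample strings discussed in this post; the helper name `build_loganalytics_connection` and the placeholder values are hypothetical, and the exact format should be checked against the KqlMagic documentation for your version.

```python
def build_loganalytics_connection(tenant_id, client_id, client_secret,
                                  workspace_id, alias=None):
    """Assemble a KqlMagic-style Log Analytics connection string.

    Assumption: KqlMagic accepts ';'-separated key='value' pairs after
    the 'loganalytics://' scheme, with the AAD application ID passed as
    the 'clientid' value. This helper is illustrative only and is not
    part of KqlMagic.
    """
    parts = [
        ("tenant", tenant_id),
        ("clientid", client_id),
        ("clientsecret", client_secret),
        ("workspace", workspace_id),
    ]
    if alias:
        parts.append(("alias", alias))

    for key, value in parts:
        if not value:
            raise ValueError(f"missing value for {key!r}")
        # Quotes and semicolons would break the key='value';... layout.
        if "'" in value or ";" in value:
            raise ValueError(f"unsupported character in value for {key!r}")

    return "loganalytics://" + ";".join(
        f"{key}='{value}'" for key, value in parts
    )


if __name__ == "__main__":
    # Placeholder values; replace them with the IDs of your own
    # AAD application and Log Analytics workspace.
    print(build_loganalytics_connection(
        tenant_id="<tenant-id>",
        client_id="<aad-app-id>",
        client_secret="<aad-app-secret>",
        workspace_id="<workspace-id>",
        alias="myworkspace",
    ))
```

In a notebook, the resulting text would go after `%kql` in a cell, once the extension has been loaded with `%reload_ext Kqlmagic`. According to note (7) of the help output, unquoted values are evaluated as Python expressions, so the secret could presumably be kept in a variable instead of being typed into the cell.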
